Outline

- Bayes’ Theorem

- Basic Form of Bayes’ Theorem

- Comparative Forms of Bayes’ Theorem

- Four Ways to Calculate Probabilities Using Bayes’ Theorem

- Testing Whether You’re Sick

- Comparative Forms of Bayes’ Theorem

- Derivation of Bayes’ Theorem

Bayes’ Theorem

- Bayes’ Theorem quantifies the idea that the probability of a hypothesis based on the evidence depends on four factors:

- how well the hypothesis predicts the evidence,

- how surprising the evidence is apart from the hypothesis,

- how likely the hypothesis is apart from the evidence,

- how likely competing hypotheses are based on the evidence.

- The simplest example of Bayes’ Theorem:

- Two coins are on a table, both heads up. One is a regular coin, the other is double-headed. You don’t know which is which. You randomly select one of the coins and, without looking, flip it. It lands heads.

- Before flipping, the probability you selected the double-headed coin was 1/2.

- That the coin landed heads makes it more likely the coin you selected is double-headed. According to Bayes’ Theorem, the probability is 2/3.

- The math:

- Let:

- D = the hypothesis that the coin you selected is the double-headed coin;

- S = the hypothesis that the coin you selected is the regular single-headed coin;

- E = the coin landed heads (the evidence).

- Prior Probabilities (after you selected the coin but before you flipped it)

- P(D) = 1/2 and P(S) = 1/2.

- Likelihoods (the probability of evidence E given each hypothesis)

- P(E|D) = 1

- the probability that the coin lands heads given that it’s double-headed = 1

- P(E|S) = 1/2

- the probability that the coin lands heads given that it’s single-headed = 1/2

- Probability of the Evidence

- P(E) = 3/4

- Why the probability is 3/4:

- The probability that the coin you flipped is double-headed is 1/2, in which case you’re guaranteed heads.

- The probability that the coin you flipped is single-headed is 1/2, in which case the probability of heads is 1/2.

- So the probability of heads = (1/2 x 1) + (1/2 x 1/2) = 3/4.

- Or, in symbols, P(E) = P(D) P(E|D) + P(S) P(E|S)

- Bayes’ Theorem (Basic Form)

- P(D|E) = P(D) P(E|D) / P(E)

- Posterior Probability (the probability the coin is double-headed based on evidence E)

- P(D|E) = (1/2 x 1) / (3/4) = 2/3
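The arithmetic above can be checked exactly with Python's standard `fractions` module. This is a minimal sketch; the variable names are illustrative and simply mirror the symbols D, S and E defined above:

```python
from fractions import Fraction

# Priors: after selecting a coin but before flipping it
p_D = Fraction(1, 2)  # P(D): you selected the double-headed coin
p_S = Fraction(1, 2)  # P(S): you selected the regular single-headed coin

# Likelihoods of the evidence E (the coin lands heads)
p_E_given_D = Fraction(1)     # P(E|D)
p_E_given_S = Fraction(1, 2)  # P(E|S)

# Probability of the evidence: P(E) = P(D) P(E|D) + P(S) P(E|S)
p_E = p_D * p_E_given_D + p_S * p_E_given_S

# Basic form of Bayes' Theorem: P(D|E) = P(D) P(E|D) / P(E)
p_D_given_E = p_D * p_E_given_D / p_E

print(p_E)          # 3/4
print(p_D_given_E)  # 2/3
```

Using `Fraction` instead of floats keeps the results as exact ratios, so they can be compared directly with the hand calculation.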

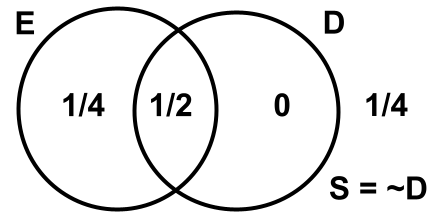

A Venn Diagram for the example, where S = ~D:

- So:

- P(D) = 1/2 + 0 = 1/2

- P(S) = P(~D) = 1/4 + 1/4 = 1/2

- P(E) = 1/4 + 1/2 = 3/4

- P(D|E) = P(D&E) / P(E) = (1/2)/(3/4) = 2/3
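The Venn-diagram route computes the same posterior from the joint probabilities of the zones rather than from likelihoods. A minimal sketch, assuming the zones hold the values used in the sums above (the zone labels are illustrative; ~E means the coin lands tails):

```python
from fractions import Fraction

# Joint probability of each zone, keyed by (coin, outcome); S = ~D
zones = {
    ("D", "E"): Fraction(1, 2),   # double-headed coin, lands heads
    ("D", "~E"): Fraction(0),     # double-headed coin cannot land tails
    ("S", "E"): Fraction(1, 4),   # regular coin, lands heads
    ("S", "~E"): Fraction(1, 4),  # regular coin, lands tails
}

# Marginals: add up the zones that lie inside each circle
p_D = sum(p for (coin, _), p in zones.items() if coin == "D")
p_S = sum(p for (coin, _), p in zones.items() if coin == "S")
p_E = sum(p for (_, side), p in zones.items() if side == "E")

# Definition of conditional probability: P(D|E) = P(D&E) / P(E)
p_D_given_E = zones[("D", "E")] / p_E

print(p_D, p_S, p_E, p_D_given_E)  # 1/2 1/2 3/4 2/3
```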

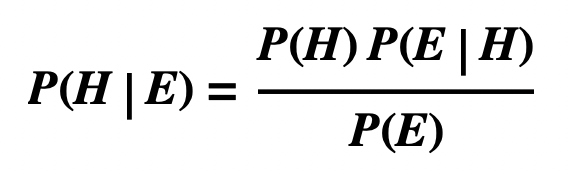

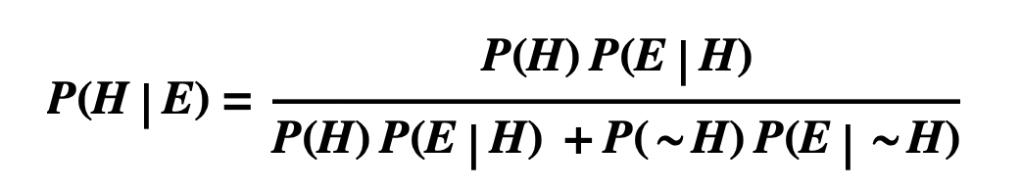

Basic Form of Bayes’ Theorem

- The basic form of Bayes’ Theorem: P(H|E) = P(H) P(E|H) / P(E)

- where:

- H is the hypothesis under consideration

- E is evidence for or against H

- P(H) is the (prior) probability of H apart from E

- P(E) is the probability of E apart from H.

- P(E|H) is the likelihood of E given H

- P(H|E) is the (posterior) probability of H given E.

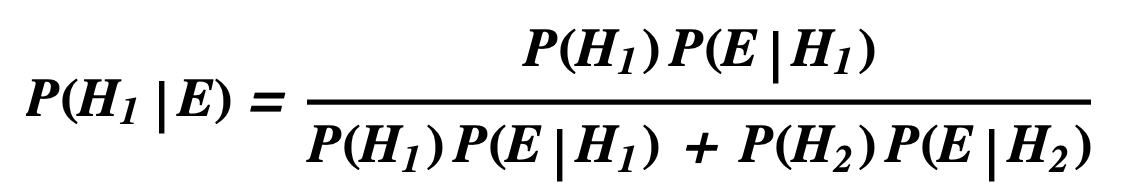
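A minimal Python sketch of the basic form as a function. The name `posterior` and the range check on P(E) are illustrative choices, not part of the original:

```python
def posterior(prior: float, likelihood: float, evidence: float) -> float:
    """Basic form of Bayes' Theorem: P(H|E) = P(H) P(E|H) / P(E).

    prior      -- P(H), the probability of H apart from E
    likelihood -- P(E|H), the likelihood of E given H
    evidence   -- P(E), the probability of E
    """
    if not 0 < evidence <= 1:
        raise ValueError("P(E) must be greater than 0 and at most 1")
    return prior * likelihood / evidence


# Coin example: P(D) = 1/2, P(E|D) = 1, P(E) = 3/4
print(posterior(0.5, 1.0, 0.75))
```

The caller must supply P(E) directly, which is what distinguishes the basic form from the comparative forms, where P(E) is built up from the competing hypotheses.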

Comparative Forms of Bayes’ Theorem

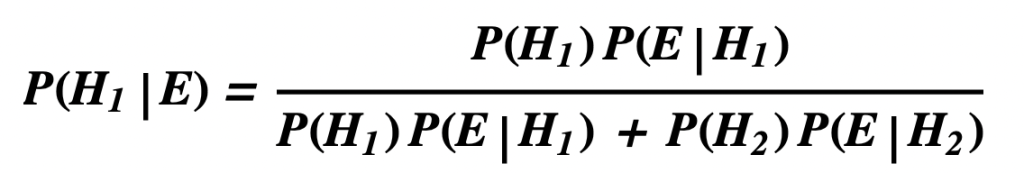

- Various comparative forms of Bayes’ Theorem can be derived from the basic form by spelling out P(E) in terms of competing hypotheses. For example, if P(E) = P(H1) P(E|H1) + P(H2) P(E|H2), the result is Bayes’ Theorem for two hypotheses.

- where:

- H1 is the hypothesis under consideration

- H2 is a competing hypothesis

- H1 and H2 are mutually exclusive and jointly exhaustive

- that is, P(H1 & H2) = 0 and P(H1 v H2) = 1

- E is evidence for or against H1 and H2
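The same substitution works for any number of mutually exclusive, jointly exhaustive hypotheses. The sketch below (the function name `posteriors` is hypothetical) computes each P(Hi) P(E|Hi) term, sums the terms to get P(E), and divides; with two hypotheses it reduces to the two-hypothesis form described above:

```python
import math
from fractions import Fraction


def posteriors(priors, likelihoods):
    """Comparative form for mutually exclusive, jointly exhaustive H1..Hn:

        P(Hi|E) = P(Hi) P(E|Hi) / sum over j of P(Hj) P(E|Hj)
    """
    if len(priors) != len(likelihoods):
        raise ValueError("need one likelihood per hypothesis")
    if not math.isclose(sum(priors), 1):
        raise ValueError("priors of exhaustive, exclusive hypotheses must sum to 1")
    joints = [p * l for p, l in zip(priors, likelihoods)]  # P(Hi) P(E|Hi)
    p_e = sum(joints)                                      # P(E)
    return [j / p_e for j in joints]


# Coin example: H1 = double-headed, H2 = regular, E = lands heads
print(posteriors([Fraction(1, 2), Fraction(1, 2)], [Fraction(1), Fraction(1, 2)]))
```

The check on the priors uses `math.isclose` so that float inputs such as 0.15 and 0.85 are not rejected because of rounding.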

Four Ways to Calculate Probabilities Using Bayes’ Theorem

- Green Cabs and Blue Taxis

- 85 percent of taxis in a city are Green Cabs. The other 15 percent are Blue Taxis. A taxi sideswiped another car on a misty winter night and drove off. A witness testified the taxi was blue. When the witness is tested under conditions like those on the night of the accident, she correctly identifies the color of the taxi 80% of the time. What’s the probability the sideswiper was a Blue Taxi?

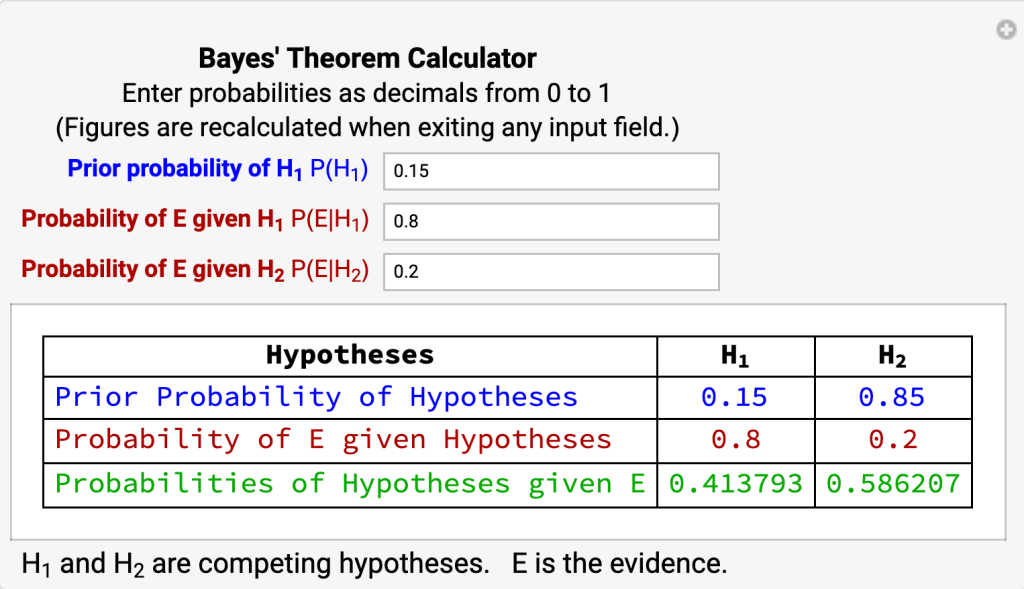

Using a Bayesian Calculator

- H1 = the taxi is blue

- H2 = the taxi is green

- E = the witness testifies the taxi is blue.

Using a Probability Tree
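A probability tree branches first on the taxi's color and then on the witness's testimony; multiplying along each path gives the probability of each leaf. A minimal Python sketch of such a tree, assuming (as the Venn-diagram and formula calculations do) that the witness is 80% accurate for both colors; the dictionary layout is illustrative:

```python
# First level: the taxi's color; second level: the witness's testimony
tree = {
    "Blue": (0.15, {"says blue": 0.80, "says green": 0.20}),
    "Green": (0.85, {"says blue": 0.20, "says green": 0.80}),
}

# Multiply along each path to get the probability of each leaf
leaves = {}
for color, (p_color, branches) in tree.items():
    for testimony, p_testimony in branches.items():
        leaves[(color, testimony)] = p_color * p_testimony

for (color, testimony), p in leaves.items():
    print(f"{color:5} -> {testimony:10} {p:.2f}")

# Keep only the paths consistent with the evidence (the witness says blue)
p_says_blue = sum(p for (_, t), p in leaves.items() if t == "says blue")
p_blue_given_says_blue = leaves[("Blue", "says blue")] / p_says_blue
print(f"P(Blue | says blue) = {p_blue_given_says_blue:.2f}")
```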

Using a Venn Diagram

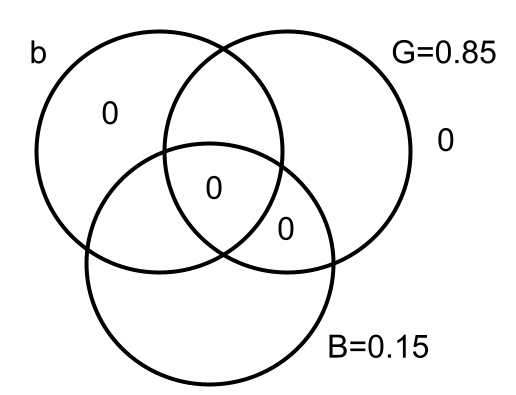

Initial Diagram

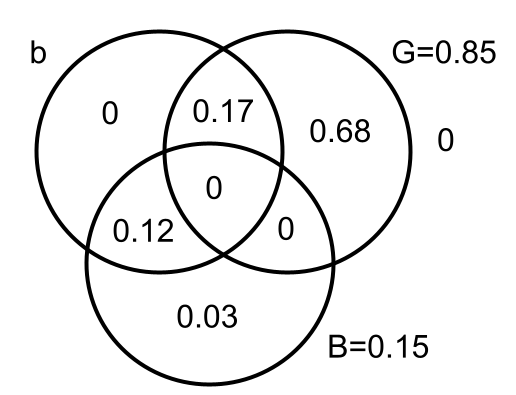

Completed Diagram

- Calculating probabilities for the zones of the Venn Diagram

- Initial Venn Diagram

- Enter zeroes in four zones signifying that:

- P(G&B) = 0

- P(GvB) = 1

- Enter probabilities for circles G and B:

- G = 0.85

- B = 0.15

- Completed Venn Diagram

- Use P(b|B) = 0.8 and P(B) = 0.15 to calculate:

- P(b&B&~G) = 0.12

- P(B&~b&~G) = 0.03

- Use P(b|G) = 0.2 and P(G) = 0.85 to calculate:

- P(b&G&~B) = 0.17

- P(G&~b&~B) = 0.68

- So:

- P(G) = 0.17 + 0.68 = 0.85

- P(B) = 0.12 + 0.03 = 0.15

- P(b) = 0.17 + 0.12 = 0.29

- P(b|B) = P(b&B) / P(B) = 0.12 / (0.12 + 0.03) = 0.8

- P(B|b) = P(b|B) x P(B) / P(b) = 0.8 x 0.15 / 0.29 = 0.41
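The zone calculations above can be reproduced in a few lines. A minimal sketch using the document's symbols (B = blue, G = green, b = the witness says blue); the variable names are illustrative:

```python
# Inputs from the problem statement
p_B, p_G = 0.15, 0.85
p_b_given_B, p_b_given_G = 0.8, 0.2

# The four nonzero zones of the completed Venn diagram
zone_b_B = p_b_given_B * p_B           # P(b&B&~G)
zone_notb_B = (1 - p_b_given_B) * p_B  # P(B&~b&~G)
zone_b_G = p_b_given_G * p_G           # P(b&G&~B)
zone_notb_G = (1 - p_b_given_G) * p_G  # P(G&~b&~B)

# Together the four zones cover the whole sample space
assert abs(zone_b_B + zone_notb_B + zone_b_G + zone_notb_G - 1) < 1e-12

p_b = zone_b_B + zone_b_G     # everything inside circle b
p_B_given_b = zone_b_B / p_b  # P(B|b) = P(b&B) / P(b)

print(round(zone_b_B, 2), round(zone_notb_B, 2), round(zone_b_G, 2), round(zone_notb_G, 2))
print(round(p_b, 2), round(p_B_given_b, 2))
```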

Using Bayes’ Formula

- P(B|b) = P(b|B) x P(B) / (P(b|B) x P(B) + P(b|G) x P(G))

- B = the taxi is blue

- G = the taxi is green

- b = the witness testifies the taxi is blue.

- P(B) = 0.15

- P(b|B) = 0.8

- P(G) = 0.85

- P(b|G) = 0.2

- Therefore, P(B|b) = (0.8 x 0.15) / (0.8 x 0.15 + 0.2 x 0.85) = 0.41.
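The formula translates directly into a function. A minimal sketch; the function name is hypothetical, and it assumes, as in the problem, that Blue and Green are the only two possibilities, so P(G) = 1 - P(B):

```python
def p_blue_given_testimony(p_B, p_b_given_B, p_b_given_G):
    """P(B|b) = P(b|B) P(B) / (P(b|B) P(B) + P(b|G) P(G)), with P(G) = 1 - P(B)."""
    p_G = 1 - p_B
    numerator = p_b_given_B * p_B
    return numerator / (numerator + p_b_given_G * p_G)


print(round(p_blue_given_testimony(0.15, 0.8, 0.2), 2))  # 0.41

# Same witness, but with half the taxis blue, the posterior rises to 0.8
print(round(p_blue_given_testimony(0.5, 0.8, 0.2), 2))
```

Varying `p_B` while holding the witness's accuracy fixed shows how strongly the prior (the 15% base rate) pulls the answer down.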

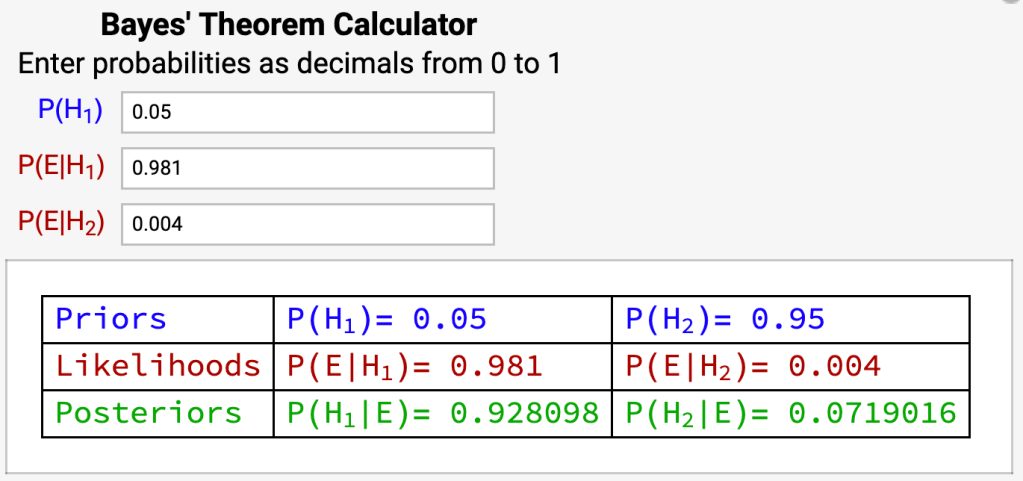

Testing Whether You’re Sick

Probability You’re Sick if You Test Positive

- Question

- You test positive for a certain kind of infection. What’s the probability you’re sick, i.e. P(sick | positive)?

- Given

- Sensitivity of the test = P(positive | sick) = 98.1%

- Specificity of the test = P(negative | not sick) = 99.6%.

- Prevalence of disease in the population = P(sick) = 5%

- Calculate

- P(sick | positive) = ?

- Let:

- H1 = You’re sick

- H2 = You’re not sick

- E = You test positive

- Prior Probabilities, based on prevalence:

- Prior probability of H1 = P(H1) = 0.05

- Prior probability of H2 = P(H2) = 0.95

- Likelihoods of E given the hypotheses:

- Probability of E given H1 = P(E|H1) = 0.981

- based on the sensitivity of the test

- Probability of E given H2 = P(E|H2) = 1 – 0.996 = 0.004

- based on the specificity of the test

- Posterior Probabilities:

- Probability of H1 given E = P(H1|E) = 0.928

- Probability of H2 given E = P(H2|E) = 0.072

- Therefore:

- P(sick | positive) = P(H1|E) = 92.8%
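The steps above can be sketched as a small function that turns the test's sensitivity and specificity into the two likelihoods and then applies the two-hypothesis form. The function name is hypothetical:

```python
def p_sick_given_positive(sensitivity, specificity, prevalence):
    """P(sick | positive) via the two-hypothesis comparative form."""
    p_pos_given_sick = sensitivity          # P(E|H1)
    p_pos_given_not_sick = 1 - specificity  # P(E|H2), the false-positive rate
    numerator = prevalence * p_pos_given_sick
    return numerator / (numerator + (1 - prevalence) * p_pos_given_not_sick)


print(f"{p_sick_given_positive(0.981, 0.996, 0.05):.1%}")  # 92.8%
```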

Probability You’re Not Sick if You Test Negative

- Question:

- You test negative for a certain kind of infection. What’s the probability you’re not sick, i.e. P(not sick | negative)?

- Given:

- Sensitivity of the test = P(positive | sick) = 98.1%

- Specificity of the test = P(negative | not sick) = 99.6%.

- Prevalence of the disease in the population = P(sick) = 5%

- Calculate:

- P(not sick | negative) = ?

- Let:

- H1 = You’re sick

- H2 = You’re not sick

- E = You test negative

- Prior Probabilities, based on prevalence:

- Prior probability of H1 = P(H1) = 0.05

- Prior probability of H2 = P(H2) = 0.95

- Likelihoods of E given the hypotheses:

- Probability of E given H1 = P(E|H1) = 1 – 0.981 = 0.019

- based on the sensitivity of the test

- Probability of E given H2 = P(E|H2) = 0.996

- based on the specificity of the test

- Posterior Probabilities:

- Probability of H1 given E = P(H1|E) = 0.001

- Probability of H2 given E = P(H2|E) = 0.999

- Therefore:

- P(not sick | negative) = P(H2|E) = 99.9%
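The negative-test case uses the same structure with the complementary likelihoods. A minimal sketch; the function name is hypothetical:

```python
def p_not_sick_given_negative(sensitivity, specificity, prevalence):
    """P(not sick | negative) via the two-hypothesis comparative form."""
    p_neg_given_sick = 1 - sensitivity   # P(E|H1), the false-negative rate
    p_neg_given_not_sick = specificity   # P(E|H2)
    numerator = (1 - prevalence) * p_neg_given_not_sick
    return numerator / (numerator + prevalence * p_neg_given_sick)


print(f"{p_not_sick_given_negative(0.981, 0.996, 0.05):.1%}")  # 99.9%
```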

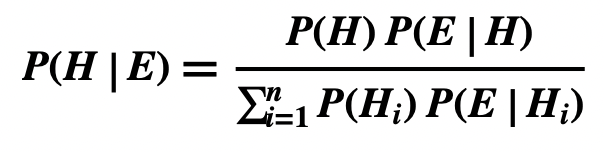

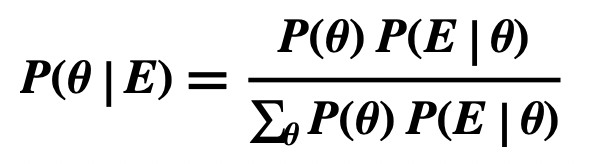

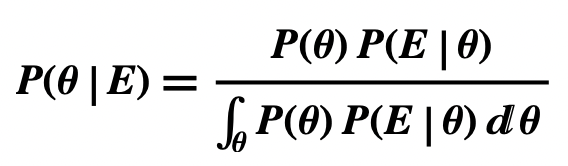

Comparative Forms of Bayes’ Theorem

Single Hypothesis, True and False

Two Hypotheses, Mutually Exclusive and Jointly Exhaustive

n Hypotheses, Mutually Exclusive and Jointly Exhaustive

Hypothesis is a Discrete Random Variable 𝞱

Hypothesis is a Continuous Random Variable 𝞱
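Each of these forms follows the same pattern: the prior times the likelihood for one hypothesis, divided by the sum (or, for a continuous θ, the integral) of that product over all competing hypotheses. The block below sketches the standard statements in LaTeX; writing ~H as \lnot H and using a lowercase p for a probability density are notational assumptions, not taken from the original.

```latex
% Single hypothesis, true and false
P(H \mid E) = \frac{P(H)\,P(E \mid H)}{P(H)\,P(E \mid H) + P(\lnot H)\,P(E \mid \lnot H)}

% Two hypotheses, mutually exclusive and jointly exhaustive
P(H_1 \mid E) = \frac{P(H_1)\,P(E \mid H_1)}{P(H_1)\,P(E \mid H_1) + P(H_2)\,P(E \mid H_2)}

% n hypotheses, mutually exclusive and jointly exhaustive
P(H_i \mid E) = \frac{P(H_i)\,P(E \mid H_i)}{\sum_{j=1}^{n} P(H_j)\,P(E \mid H_j)}

% Hypothesis is a discrete random variable \theta
P(\theta \mid E) = \frac{P(\theta)\,P(E \mid \theta)}{\sum_{\theta'} P(\theta')\,P(E \mid \theta')}

% Hypothesis is a continuous random variable \theta (p is a probability density)
p(\theta \mid E) = \frac{p(\theta)\,P(E \mid \theta)}{\int p(\theta')\,P(E \mid \theta')\,d\theta'}
```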

Derivation of Bayes’ Theorem

Derivation of Basic Form

- P(H|E) = P(H&E) / P(E)

- Definition of Conditional Probability

- P(H|E) = P(H) P(E|H) / P(E)

- General Conjunction Rule

Derivation of Comparative Form (Single Hypothesis)

- The Comparative Form follows from the Basic Form because P(E) = P(H) P(E|H) + P(~H) P(E|~H)

- E is logically equivalent to (E&H) v (E&~H)

- So, P(E) = P((E&H) v (E&~H))

- Equivalence Rule

- So, P(E) = P(E&H) + P(E&~H)

- Special Disjunction Rule

- So, P(E) = P(H) P(E|H) + P(~H) P(E|~H)

- General Conjunction Rule